Did my inaugural cook in the 14.5″ Weber Smokey Mountain my lifepartner is giving me for my birthday (she knows I don’t like surprises).

Will probably post some written notes of my smoking endeavours, at some point. In the meantime, some pictures documenting the whole thing.

Using WordPress as a Syllabus Database: Learning is Fun

(More screenshots in a previous post on this blog.)

Worked on a WordPress project all night, the night before last. Was able to put together a preliminary version of a syllabus database that I’ve been meaning to build for an academic association with which I’m working.

There are some remaining bugs to solve but, I must say, I’m rather pleased with the results so far. In fact, I was able to solve the most obvious bugs rather quickly, last night.

More importantly, I’ve learnt a lot. And I think I can build a lot of things on top of that learning experience.

Part of the inspiration comes from Kyle Jones’s blogpost about a “staff directory”. In addition, Justin Tadlock has had a large (and positive) impact on my learning process, both through his WordPress-related blogposts about custom post types and through his work on the Hybrid Theme (especially through the amazing support forums). Not to mention WordCamp Montreal, official documentation, plugin pages, tutorials, and a lot of forum- and blogposts about diverse things surrounding WordPress (including CSS).

I got a lot of indirect help and I wouldn’t have been able to go very far in my project without that help. But, basically, it’s been a learning experience for me as an individual. I’m sure more skilled people would have been able to whip this up in no time.

Thing is, it’s been fun. Close to Csíkszentmihályi’s notion of “flow”. (Philippe’s a friend of mine who did research on flow and videogames. He’s the one who first introduced me to “flow”, in this sense.)

So, how did I achieve this? Well, through both plugins and theme files.

To create this database, I originally used three plugins from More Plugins: More Fields, More Taxonomies, and More Types. Had also done so in my previous attempt at a content database. At the time, these plugins helped me in several ways. But, with the current WordPress release (3.2.1), the current versions of these plugins (2.0.5.2, 1.0.1, and 1.1.1b1, respectively) are a bit buggy.

In fact, I ended up coding my custom taxonomies “from scratch”, after running into apparent problems with the More Taxonomies plugin. Eventually did the same thing with my “Syllabus” post type, replacing More Types. Wasn’t very difficult and it solved some rather tricky bugs.

Naïvely, I thought that the plugins’ export function would actually create that code, so I’d be able to put it in my own files and get rid of that plugin. But it’s not the case. Doh! Unfortunately, the support forums don’t seem so helpful either, with many questions left unanswered. So I wouldn’t really recommend these plugins apart from their pedagogical value.

The plugins were useful in helping me get around some “conceptual” issues, but it seems safer and more practical to code things from scratch, at least with taxonomies and custom post types. For “custom metaboxes”, I’m not sure I’ll have as easy a time replacing More Fields as I did replacing More Taxonomies and More Types. (More Fields helps create custom fields in the post editing interface.)

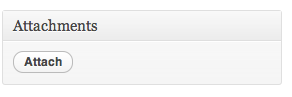

Besides the More Plugins, I’m only using two other plugins: Jonathan Christopher’s Attachments and the very versatile Google Doc Embedder (gde) by Kevin Davis.

Attachments provides an easy way to attach files to a post and, importantly, its plugin page provides usable notes about implementation which greatly helped me in my learning process. I think I could code in some of that plugin’s functionality, now that I get a better idea of how WordPress attachments work. But it seems not to be too buggy so I’ll probably keep it.

As its name does not imply, gde can embed any file from a rather large array of file types: Adobe Reader (PDF), Microsoft Office (doc/docx, ppt/pptx/pps, xls/xlsx), and iWork Pages, along with multipage image files (tiff, Adobe Illustrator, Photoshop, SVG, EPS/PS…). The file format support comes from Google Docs Viewer (hence the plugin name).

In fact, I just realized that GDV supports zip and RAR archives. Had heard (from Gina Trapani) of that archive support in Gmail but didn’t realize it applied to GDV. Tried displaying a zip file through gde, last night, and it didn’t work. Posted something about this on the plugin’s forum and “k3davis” already fixed this, mentioning me in the 2.2 release notes.

Allowing the display of archives might be very useful, in this case. It’s fairly easy to get people to put files in a zip archive and upload it. In fact, several mail clients do all of this automatically, so there’s probably a way to get documents through emailed zip files and display the content along with the syllabus.

So, a cool plugin became cooler.

[gview file="http://blog.enkerli.com/files/2011/08/syllabusdb-0.2.zip" height="20%"]

As it so happens, gde is already installed on the academic site for which I’m building this very same syllabus database. In that case, I’ve been using gde to embed PDF files (for instance, in this page providing web enhancements for an article in the association’s journal). So I knew it could be useful in terms of displaying course outlines and such, within individual pages of the syllabus database.

What I wasn’t sure I could do is programmatically embed files added to a syllabus page. In other words, I knew I could display these files using some shortcode on appropriate files’ URLs (including those of attached files). What I wasn’t sure how to do (and had a hard time figuring out) is how to send these URLs from a field in the database: I knew how to manually enter the code, but I didn’t know how to automatically display the results of the code when a link is entered in the right place.

The reason this matters is that I would like “normal human beings” (i.e., noncoders and, mostly, nongeeks) to enter the relevant information for their syllabi. One of WordPress’s advantages is the fact that, despite its power, it’s very easy to get nongeeks to do neat things with it. I’d like the syllabus database to be this type of neat thing.

The Attachments plugin helps, but still isn’t completely ideal. It does allow for drag-and-drop upload and it does provide a minimalist interface for attaching uploaded files to blogposts.

In the first case, it’s just a matter of clicking the Attach button and dropping a file in the appropriate field. In the second case, it’s a matter of clicking another Attach button.

The problem is between these two Attach buttons.

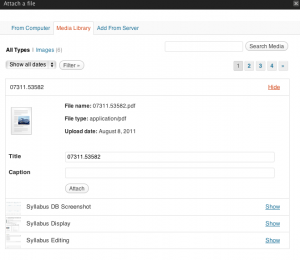

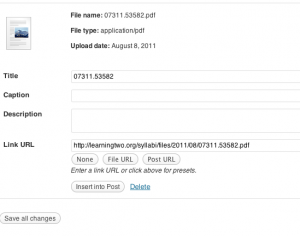

The part of the process between uploading the file and finding the Attach button takes several nonobvious steps. After the file has been uploaded, the most obvious buttons are Insert into Post and Save all changes, neither of which sounds particularly useful in this context. But Save all changes is the one which should be clicked.

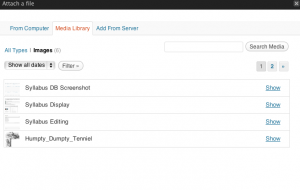

To get to the second Attach button, I first need to go to the Media Library a second time. Recently uploaded images are showing.

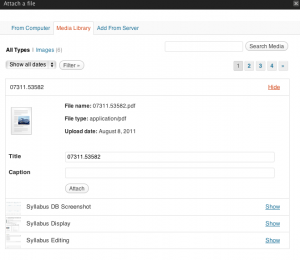

For other types of files, I then click All Types, which shows a reverse chronological list of all recently uploaded files (older files can be found through the Search Media field). I then click on the Show link associated with a given file (most likely, the most recent upload, which is the first in the list).

Then, finally, the final Attach button shows up.

Clicking it, the file is attached to the current post, which was the reason behind the whole process. Thanks to both gde and Attachments, that file is then displayed along with the rest of the syllabus entry.

It only takes seconds to minutes to attach a file (depending on filesize, connection speed, etc.). Not that long. And the media library can be very useful in many ways. But I just imagine myself explaining the process to instructors and other people submitting syllabi for inclusion in the database.

Far from ideal.

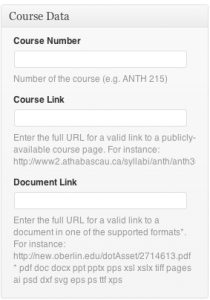

A much easier process is the one of adding files by pasting a file URL in a field. Which is exactly what I’ve added as a possibility for a syllabus’s main document (say, the PDF version of the syllabus).

Passing that URL to gde, I can automatically display the document in the document page, as I’m doing with attachments from the media library. The problem with this, obviously, is that it requires a public URL for the document. The very same “media library” can be used to upload documents. In fact, copying the URL from an uploaded file is easier than finding the “Attach” button as explained previously. But it makes the upload a separate process on the main site. A process which can be taught fairly easily, but a process which isn’t immediately obvious.
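To make the URL-field idea a bit more concrete, here’s a minimal sketch of how a pasted document URL could be turned into the kind of `[gview]` shortcode shown earlier in this post. It’s written in Python purely for illustration (a real WordPress theme would do this in PHP), and the function name `gview_shortcode` and its validation rules are my own assumptions, not something gde provides. Only the `file` and `height` attributes come from the shortcode used above.

```python
from typing import Optional
from urllib.parse import urlparse


def gview_shortcode(url: str, height: Optional[str] = None) -> str:
    """Build a [gview] shortcode string for a publicly reachable document URL.

    Hypothetical helper: only the `file` and `height` attribute names are
    taken from the shortcode used in this post.
    """
    cleaned = url.strip()
    parts = urlparse(cleaned)
    # The viewer needs a public URL, so reject anything that is not http(s).
    if parts.scheme not in ("http", "https") or not parts.netloc:
        raise ValueError(f"not a public http(s) URL: {url!r}")
    # A double quote or square bracket would break the shortcode syntax.
    if any(ch in cleaned for ch in '"[]'):
        raise ValueError("URL contains characters that would break the shortcode")
    attributes = [f'file="{cleaned}"']
    if height:
        attributes.append(f'height="{height}"')
    return "[gview " + " ".join(attributes) + "]"


if __name__ == "__main__":
    # Example with the zip file embedded earlier in this post.
    print(gview_shortcode(
        "http://blog.enkerli.com/files/2011/08/syllabusdb-0.2.zip", "20%"
    ))
```

In the theme itself, the equivalent step would presumably read the URL from the custom field and hand the resulting string to WordPress’s shortcode processing (something like `do_shortcode()`), so that people submitting a syllabus only ever paste a link.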

I might make use of a Dropbox account for just this kind of situation. It’s also a separate process, but it’s one which may be easier for some people.

In the end, I’ll have to see with users what makes the most sense for them.

In the past, I’ve used plugins like Contact Form 7 (CF7), by Takayuki Miyoshi, and Fast Secure Contact Form (FSCF) by Mike Challis to try and implement something similar. A major advantage is that they allow for submissions by users who aren’t logged in. This might be a dealmaking feature for either FSCF or CF7, as I don’t necessarily want to create accounts for everyone who might submit a syllabus. Had issues with user registration, in the past. Like attachments, onboarding remains an issue for a lot of people. Also, thanks to yet other plugins like Michael Simpson’s Contact Form to Database (CFDB), it should be possible to make form submissions into pending items in the syllabus database. I’ll be looking into this.

Another solution might be Gravity Forms. Unlike the plugins I’ve mentioned so far, it’s a commercial product. But it sounds like it might offer some rather neat features which may make syllabus submission a much more interesting process. However, it’s meant for a very different use case, which has more to do with “lead data management” and other business-focused usage. I could innovate through its use. But there might be more appropriate solutions.

As is often the case with WordPress, the “There’s a plugin for that” motto can lead to innovation. Even documenting the process (by blogging it) can be a source of neat ideas.

A set of ideas I’ve had, for this syllabus database, came from looking into the Pods CMS Framework for WordPress. Had heard about Pods CMS through the WordCast Conversations podcast. For several reasons, it sent me on an idea spree and, for days, I was taking copious notes about what could be done. Not only about this syllabus database but about a full “learning object repository” built on top of WordPress. The reason I want to use WordPress is that not only am I a “fanboi” of Automattic (the organization behind WordPress), but I readily plead guilty to using WordPress as a Golden Hammer. There are multiple ways to build a learning object repository. (Somehow, I’m convinced that some of my Web developing friends would say that Ruby on Rails is the ideal solution.) But I’ve got many of my more interesting ideas through looking into Pods CMS, a framework for WordPress, and I don’t know the first thing about RoR.

Overall, Pods CMS sounds like a neat approach. Its pros and cons make it sound like an interesting alternative to WordPress’s custom post types for certain projects, as well as a significant shift from the main ways WordPress is used. During WordCamp Montreal, people I asked about it were wary of Pods. I eventually thought I would wait for version 2.0 to come out before investing significant effort in it.

In the meantime, what I’ve built is a useful base knowledge of how to use WordPress as a content database.

Can’t wait to finish adding features and fixing bugs, so I can release it to the academic organization. I’m sure they’ll enjoy it.

Even if they don’t ever use it, I’ve gained a lot of practical insight into how to do such things. It may be obvious to others but it does wonders to my satisfaction levels.

I’m truly in flow!

Intimacy, Network Effect, Hype

Is “intimacy” a mere correlate of the network effect?

Can we use the network effect to explain what has been happening with Quora?

Is the Quora hype related to network effect?

I really don’t feel a need to justify my dislike of Quora. Oh, sure, I can explain it. At length. Even on Quora itself. And elsewhere. But I tend to sense some defensiveness on the part of Quora fans.

[Speaking of fans, I have blogposts on fanboism laying in my head, waiting to be hatched. Maybe this will be part of it.]

But the important point, to me, isn’t about whether or not I like Quora. It’s about what makes Quora so divisive. There are people who dislike it and there are some who defend it.

Originally, I was only hearing from contacts and friends who just looooved Quora. So I was having a “Ionesco moment”: why is it that seemingly “everyone” who uses it loves Quora when, to me, it represents such a move in the wrong direction? Is there something huge I’m missing? Or has that world gone crazy?

It was a surreal experience.

And while I’m all for surrealism, I get this strange feeling when I’m so unable to understand a situation. It’s partly a motivation for delving into the issue (I’m surely not the only ethnographer to get this). But it’s also unsettling.

And, for Quora at least, this phase seems to be over. I now think I have a good idea as to what makes for such a difference in people’s experiences with Quora.

It has to do with the network effect.

I’m sure some Quora fanbois will disagree, but it’s now such a clear picture in my mind that it gets me into the next phase. Which has little to do with Quora itself.

The “network effect” is the kind of notion which is so commonplace that few people bother explaining it outside of introductory courses (same thing with “group forming” in social psychology and sociology, or preferential marriage patterns in cultural anthropology). What someone might call (perhaps dismissively): “textbook stuff.”

I’m completely convinced that there’s a huge amount of research on the network effect, but I’m also guessing few people are looking it up. And I’m not accusing people, here. Ever since I first heard of it (in 1993, or so), I’ve rarely looked at explanations of it and I actually don’t care about the textbook version of the concept. And I won’t “look it up.” I’m more interested in diverse usage patterns related to the concept (I’m a linguistic anthropologist).

So, the version I first heard (at a time when the Internet was off most people’s radar) was something like: “in networked technology, you need critical mass for the tools to become truly useful. For instance, the telephone has no use if you’re the only one with one and it has only very limited use if you can only call a single person.” Simple to the point of being simplistic, but a useful reminder.

Over the years, I’ve heard and read diverse versions of that same concept, usually in more sophisticated form, but usually revolving around the same basic idea that there’s a positive effect associated with broader usage of some networked technology.

I’m sure specialists have explored every single implication of this core idea, but I’m not situating myself as a specialist of technological networks. I’m into social networks, which may or may not be associated with technology (however defined). There are social equivalents of the “network effect” and I know some people are passionate about those. But I find that it’s quite limiting to focus so exclusively on quantitative aspects of social networks. What’s so special about networks, in a social science perspective, isn’t scale. Social scientists are used to working with social groups at any scale and we’re quite aware of what might happen at different scales. But networks are fascinating because of different features they may have. We may gain a lot when we think of social networks as acephalous, boundless, fluid, nameless, indexical, and impactful. [I was actually lecturing about some of this in my “Intro to soci” course, yesterday…]

So, from my perspective, “network effect” is an interesting concept when talking about networked technology, in part because it relates to the social part of those networks (innovation happens mainly through technological adoption, not through mere “invention”). But it’s not really the kind of notion I’d visit regularly.

This case is somewhat different. I’m perceiving something rather obvious (and which is probably discussed extensively in research fields which have to do with networked technology) but which strikes me as missing from some discussions of social networking systems online. In a way, it’s so obvious that it’s kind of difficult to explain.

But what’s coming up in my mind has to do with a specific notion of “intimacy.” It’s actually something which has been on my mind for a while and it might still need to “bake” a bit longer before it can be shared properly. But, like other University of the Streets participants, I perceive the importance of sharing “half-baked thoughts.”

And, right now, I’m thinking of an anecdotal context which may get the point across.

Given my attendance policy, there are class meetings during which a rather large proportion of the class is missing. I tend to call this an “intimate setting,” though I’m aware that it may have different connotations to different people. From what I can observe, people in class get the point. The classroom setting is indeed changing significantly and it has to do with being more “intimate.”

Not that we’re necessarily closer to one another physically or intellectually. It need not be a “bonding experience” for the situation to be interesting. And it doesn’t have much to do with “absolute numbers” (a classroom with 60 people is relatively intimate when the usual attendance is close to 100; a classroom with 30 people feels almost overwhelming when only 10 people were showing up previously). But there’s some interesting phenomenon going on when there are fewer people than usual, in a classroom.

Part of this phenomenon may relate to motivation. In some ways, one might expect that those who are attending at that point are the “most dedicated students” in the class. This might be a fairly reasonable assumption in the context of a snowstorm but it might not work so well in other contexts (say, when the incentive to “come to class” relates to extrinsic motivation). So, what’s interesting about the “intimate setting” isn’t necessarily that it brings together “better people.” It’s that something special goes on.

What’s going on, with the “intimate classroom,” can vary quite a bit. But there’s still “something special” about it. Even when it’s not a bonding experience, it’s still a shared experience. While “communities of practice” are fascinating, this is where I tend to care more about “communities of experience.” And, again, it doesn’t have much to do with scale and it may have relatively little to do with proximity (physical or intellectual). But it does have to do with cognition and communication. What is special with the “intimate classroom” has to do with shared assumptions.

Going back to Quora…

While an online service with any kind of network effect is still relatively new, there’s something related to the “intimate setting” going on. In other words, it seems like the initial phase of the network effect is the “intimacy” phase: the service has a “large enough userbase” to be useful (so, it’s achieved a first type of critical mass) but it’s still not so “large” as to be overwhelming.

During that phase, the service may feel to people like a very welcoming place. Everyone can be on a “first-name basis.” High-status users mingle with others as if there weren’t any hierarchy. In this sense, it’s a bit like the liminal phase of a rite of passage, during which communitas is achieved.

This phase is a bit like the Golden Age for an online service with a significant “social dimension.” It’s the kind of time which may make people “wax nostalgic about the good ole days,” once it’s over. It’s the time before the BYT comes around.

Sure, there’s a network effect at stake. You don’t achieve much of a “sense of belonging” by yourself. But, yet again, it’s not really a question of scale. You can feel a strong bond in a dyad and a team of three people can perform quite well. On the other hand, the cases about which I’m thinking are orders of magnitude beyond the so-called “Dunbar number” which seems to obsess so many people (outside of anthro, at least).

Here’s where it might get somewhat controversial (though similar things have been said about Quora): I’d argue that part of this “intimacy effect” has to do with a sense of “exclusivity.” I don’t mean this as the way people talk about “elitism” (though, again, there does seem to be explicit elitism involved in Quora’s case). It’s more about being part of a “select group of people.” About “being there at the time.” It can get very elitist, snobbish, and self-serving very fast. But it’s still about shared experiences and, more specifically, about the perceived boundedness of communities of experience.

We all know about early adopters, of course. And, as part of my interest in geek culture, I keep advocating for more social awareness in any approach to the adoption part of social media tools. But what I mean here isn’t about a “personality type” or about the “attributes of individual actors.” In fact, this is exactly a point at which the study of social networks starts deviating from traditional approaches to sociology. It’s about the special type of social group the “initial userbase” of such a service may represent.

From a broad perspective (as outsiders, say, or using the comparativist’s “etic perspective”), that userbase is likely to be rather homogeneous. Depending on the enrollment procedure for the service, the structure of the group may be a skewed version of an existing network structure. In other words, it’s quite likely that, during that phase, most of the people involved were already connected through other means. In Quora’s case, given the service’s pushy overeagerness on using Twitter and Facebook for recruitment, it sounds quite likely that many of the people who joined Quora could already be tied through either Twitter or Facebook.

Anecdotally, it’s certainly been my experience that the overwhelming majority of people who “follow me on Quora” have been part of my first degree on some social media tool in the recent past. In fact, one of my main reactions as I’ve been getting those notifications of Quora followers was: “here are people with whom I’ve been connected but with whom I haven’t had significant relationships.” In some cases, I was actually surprised that these people would “follow” me while it appeared like they actually weren’t interested in having any kind of meaningful interactions. To put it bluntly, it sometimes appeared as if people who had been “snubbing” me were suddenly interested in something about me. But that was just in the case of a few people I had unsuccessfully tried to engage in meaningful interactions and had given up thinking that we might not be that compatible as interlocutors. Overall, I was mostly surprised at seeing the quick uptake in my follower list, which doesn’t tend to correlate with meaningful interaction, in my experience.

Now that I understand more about the unthinking way new Quora users are adding people to their networks, my surprise has transformed into an additional annoyance with the service. In a way, it’s a repeat of the time (what was it? 2007?) when Facebook applications got their big push and we kept receiving those “app invites” because some “social media mar-ke-tors” had thought it wise to force people to “invite five friends to use the service.” To Facebook’s credit (more on this later, I hope), these pushy and thoughtless “invitations” are a thing of the past…on those services where people learnt a few lessons about social networks.

Perhaps interestingly, I’ve had a very similar experience with Scribd, at about the same time. I was receiving what seemed like a steady flow of notifications about people from my first degree online network connecting with me on Scribd, whether or not they had ever engaged in a meaningful interaction with me. As with Quora, my initial surprise quickly morphed into annoyance. I wasn’t using either service much and these meaningless connections made it much less likely that I would ever use these services to get in touch with new and interesting people. If most of the people who are connecting with me on Quora and Scribd are already in my first degree and if they tend to be people with whom I have limited interactions, why would I use these services to expand the range of people with whom I want to have meaningful interactions? They’re already within range and they haven’t been very communicative (for whatever reason, I don’t actually assume they were consciously snubbing me). Investing in Quora for “networking purposes” seemed like a futile effort, for me.

Perhaps because I have a specific approach to “networking.”

In my networking activities, I don’t focus on either “quantity” or “quality” of the people involved. I seriously, genuinely, honestly find something worthwhile in anyone with whom I can eventually connect, so the “quality of the individuals” argument doesn’t work with me. And I’m seriously, genuinely, honestly not trying to sell myself on a large market, so the “quantity” issue is one which has almost no effect on me. Besides, I already have what I consider to be an amazing social network online, in terms of quality of interactions. Sure, people with whom I interact are simply amazing. Sure, the size of my first degree network on some services is “well above average.” But these things wouldn’t matter at all if I weren’t able to have meaningful interactions in these contexts. And, as it turns out, I’m lucky enough to be able to have very meaningful interactions in a large range of contexts, both offline and on. Part of it has to do with the fact that I’m a teaching addict. Part of it has to do with the fact that I’m a papillon social (social butterfly). It may even have to do with a stage in my life, at which I still care about meeting new people but I don’t really need new people in my circle. Part of it makes me much less selective than most other people (I like to have new acquaintances) and part of it makes me more selective (I don’t need new “friends”). If it didn’t sound condescending, I’d say it has to do with maturity. But it’s not about my own maturity as a human being. It’s about the maturity of my first-degree network.

There are other people who are in an expansionist phase. For whatever reason (marketing and job searches are the best-known ones, but they’re really not the only ones), some people need to get more contacts and/or contacts with people who have some specific characteristics. For instance, there are social activists out there who need to connect to key decision-makers because they have a strong message to carry. And there are people who were isolated from most other people around them because of stigmatization who just need to meet non-judgmental people. These, to me, are fine goals for someone to expand her or his first-degree network.

Some of it may have to do with introversion. While extraversion is a “dominant trait” of mine, I care deeply about people who consider themselves introverts, even when they start using it as a divisive label. In fact, that’s part of the reason I think it’d be neat to hold a ShyCamp. There’s a whole lot of room for human connection without having to rely on devices of outgoingness.

So, there are people who may benefit from expansion of their first-degree network. In this context, the “network effect” matters in a specific way. And if I think about “network maturity” in this case, there’s no evaluation involved, contrary to what it may seem like.

As you may have noticed, I keep insisting on the fact that we’re talking about “first-degree network.” Part of the reason is that I was lecturing about a few key network concepts just yesterday, so getting people to understand the difference between “the network as a whole” (especially on an online service) and “a given person’s first-degree network” is important to me. But another part relates back to what I’m getting to realize about Quora and Scribd: the process of connecting through an online service may have as much to do with collapsing some degrees of separation as with “being part of the same network.” To use Granovetter’s well-known terms, it’s about transforming “weak ties” into “strong” ones.

And I specifically don’t mean it as a “quality of interaction.” What is at stake, on Quora and Scribd, seems to have little to do with creating stronger bonds. But they may want to create closer links, in terms of network topography. In a way, it’s a bit like getting introduced on LinkedIn (and it corresponds to what biz-minded people mean by “networking”): you care about having “access” to that person, but you don’t necessarily care about her or him, personally.

There’s some sense in using such an approach on “utilitarian networks” like professional or Q&A ones (LinkedIn does both). But there are diverse ways to implement this approach and, to me, Quora and Scribd do it in a way which is very precisely counterproductive. The way LinkedIn does it is context-appropriate. So is the way Academia.edu does it. In both of these cases, the “transaction cost” of connecting with someone is commensurate with the degree of interaction which is possible. On Scribd and Quora, they almost force you to connect with “people you already know” and the “degree of interaction” which is imposed on users is disproportionately high (especially in Quora’s case, where a contact of yours can annoy you by asking you personally to answer a specific question). In this sense, joining Quora is a bit closer to being conscripted in a war while registering on Academia.edu is just a tiny bit more like getting into a country club. The analogies are tenuous but they probably get the point across. Especially since I get the strong impression that the “intimacy phase” has a lot to do with the “country club mentality.”

See, the social context in which these services gain much traction (relatively tech-savvy Anglophones in North America and Europe) assigns very negative connotations to social exclusion but people keep being fascinated by the affordances of “select clubs” in terms of social capital. In other words, people may be very vocal as to how nasty it would be if some people had exclusive access to some influential people yet there’s what I perceive as an obsession with influence among the same people. As a caricature: “The ‘human rights’ movement leveled the playing field and we should never ever go back to those dark days of Old Boys’ Clubs and Secret Societies. As soon as I become the most influential person on the planet, I’ll make sure that people who think like me get the benefits they deserve.”

This is where the notion of elitism, as applied specifically to Quora but possibly expanding to other services, makes the most sense. “Oh, no, Quora is meant for everyone. It’s Democratic! See? I can connect with very influential people. But, isn’t it sad that these plebeians are coming to Quora without a proper knowledge of the only right way to ask questions and without proper introduction by people I can trust? I hate these n00bz! Even worse, there are people now on the service who are trying to get social capital by promoting themselves. The nerve of these people, to invade my own dedicated private sphere where I was able to connect with the ‘movers and shakers’ of the industry.” No wonder Quora is so journalistic.

But I’d argue that there’s a part of this which is a confusion between first-degree networks and connection. Before Quora, the same people were indeed connected to these “influential people,” who allegedly make Quora such a unique system. After all, they were already online and I’m quite sure that most of them weren’t more than three or four degrees of separation from Quora’s initial userbase. But access to these people was difficult because connections were indirect. “Mr. Y Z, the CEO of Company X was already in my network, since there were employees of Company X who were connected through Twitter to people who follow me. But I couldn’t just coldcall CEO Z to ask him a question, since CEOs are out of reach, in their caves. Quora changed everything because Y responded to a question by someone ‘totally unconnected to him’ so it’s clear, now, that I have direct access to my good ol’ friend Y’s inner thoughts and doubts.”

As RMS might say, this type of connection is a “seductive mirage.” Because, I would argue, not much has changed in terms of access and whatever did change was already happening all over this social context.

At the risk of sounding dismissive, again, I’d say that part of what people find so alluring in Quora is “simply” an epiphany about the Small World phenomenon. With all sorts of fallacies caught in there. Another caricature: “What? It takes only three contacts for me to send something from rural Idaho to the head honcho at some Silicon Valley firm? This is the first time something like this happens, in the History of the Whole Wide World!”

Actually, I do feel quite bad about these caricatures. Some of those who are so passionate about Quora, among my contacts, have been very aware of many things happening online since the early 1990s. But I have to be honest in how I receive some comments about Quora and much of it sounds like a sudden realization of something which I thought was a given.

The fact that I feel so bad about these characterizations relates to the fact that, contrary to what I had planned to do, I’m not linking to specific comments about Quora. Not that I don’t want people to read about this but I don’t want anyone to feel targeted. I respect everyone and my characterizations aren’t judgmental. They’re impressionistic and, again, caricatures.

Speaking of what I had planned, beginning this post… I actually wanted to talk less about Quora specifically and more about other issues. Sounds like I’m currently getting sidetracked, and it’s kind of sad. But it’s ok. The show must go on.

So, other services…

While I had similar experiences with Scribd and Quora about getting notifications of new connections from people with whom I haven’t had meaningful interactions, I’ve had a very different experience on many (probably most) other services.

An example I like is Foursquare. “Friendship requests” I get on Foursquare are mostly from: people with whom I’ve had relatively significant interactions in the past, people who were already significant parts of my second-degree network, or people I had never heard of. Sure, there are some people with whom I had tried to establish connections, including some who seem to reluctantly follow me on Quora. But the proportion of these is rather minimal and, for me, the stakes in accepting a friend request on Foursquare are quite low since it’s mostly about sharing data I already share publicly. Instead of being able to solicit my response to a specific question, the main thing my Foursquare “friends” can do that others can’t is give me recommendations, tips, and “notifications of their presence.” These are all things I might actually enjoy, so there’s nothing annoying about it. Sure, like any online service with a network component, these days, there are some “friend requests” which are more about self-promotion. But those are usually easy to avoid and, even if I get fooled by a “social media mar-ke-tor,” the most this person may do to me is give me a recommendation about “some random place.” Again, easy to avoid. So, the “social network” dimension of Foursquare seems appropriate, to me. Not ideal, but pretty decent.

I never really liked the “game” aspect and while I did play around with getting badges and mayorships in my first few weeks, it never felt like the point of Foursquare, to me. As Foursquare eventually became mainstream in Montreal and I was asked by a journalist about my approach to Foursquare, I was exactly in the phase when I was least interested in the game aspect and wished we could talk a whole lot more about the other dimensions of the phenomenon.

And I realize that, as I’m saying this, I may sound to some as exactly those who are bemoaning the shift out of the initial userbase of some cherished service. But there are significant differences. Note that I’m not complaining about the transition in the userbase. In the Foursquare context, “the more the merrier.” I was actually glad that Foursquare was becoming mainstream as it was easier to explain to people, it became more connected with things business owners might do, and generally had more impact. What gave me pause, at the time, is the journalistic hype surrounding Foursquare which seemed to be missing some key points about social networks online. Besides, I was never annoyed by this hype or by Foursquare itself. I simply thought that it was sad that the focus would be on a dimension of the service which was already present on not only Dodgeball and other location-based services but, pretty much, all over the place. I was critical of the seemingly unthinking way people approached Foursquare but the service itself was never that big a deal for me, either way.

And I pretty much have the same attitude toward any tool. I happen to have my favourites, which either tend to fit neatly in my “workflow” or otherwise have some neat feature I enjoy. But I’m very wary of hype and backlash. Especially now. It gets old very fast and it’s been going for quite a while.

Maybe I should just move away from the “tech world.” It’s the context for such a hype and buzz machine that it almost makes me angry. [I very rarely get angry.] Why do I care so much? You can say it’s accumulation, over the years. Because I still care about social media and I really do want to know what people are saying about social media tools. I just wish discussion of these tools weren’t soooo “superlative”…

Obviously, I digress. But this is what I like to do on my blog and it has a cathartic effect. I actually do feel better now, thank you.

And I can talk about some other things I wanted to mention. I won’t spend much time on them because this is long enough (both as a blogpost and as a blogging session). But I want to set a few placeholders, for further discussion.

One such placeholder is about some pet theories I have about what worked well with certain services. Which is exactly the kind of thing “social media entrepreneurs” and journalists are so interested in, but they end up talking about the same dimensions.

Let’s take Twitter, for instance. Sure, sure, there’s been a lot of talk about what made Twitter a success and probably-everybody knows that it got started as a side-project at Odeo, and blah, blah, blah. Many people also realize that there were other microblogging services around as Twitter got traction. And I’m sure some people use Twitter as a “textbook case” of “network effect” (however they define that effect). I even mention the celebrity dimensions of the “Twitter phenomenon” in class (my students aren’t easily starstruck by Bieber and Gaga) and I understand why journalists are so taken by Twitter’s “broadcast” mission. But something which has been discussed relatively rarely is the level of responsiveness by Twitter developers, over the years, to people’s actual use of the service. Again, we all know that “@-replies,” “hashtags,” and “retweets” were all emerging usage patterns that Twitter eventually integrated. And some discussion has taken place when Twitter changed its core prompt to reflect the fact that the way people were using it had changed. But there’s relatively little discussion as to what this process implies in terms of “developing philosophy.” As people are still talking about being “proactive” (ugh!) with users, and crude measurements of popularity keep being sold and bandied about, a large part of the tremendous potential for responsiveness (through social media or otherwise) is left untapped. People prefer to hype a new service which is “likely to have Twitter-like success because it has the features users have said they wanted in the survey we sell.” Instead of talking about the “get satisfaction” effect in responsiveness. Not that “consumers” now have “more power than ever before.” But responsive developers who refrain from imposing their views (Quora, again) tend to have a more positive impact, socially, than those who are merely trying to expand their userbase.

Which leads me to talk about Facebook. I could talk for hours on end about Facebook, but I almost feel afraid to do so. At this point, Facebook is conceived in what I perceive to be such a narrow way that it seems like anything I might say would sound exceedingly strange. Given the fact that it was part of one of the first waves of Web tools with explicit social components to reach mainstream adoption, it almost sounds “historical” in timeframe. But, as so many people keep saying, it’s just not that old. IMHO, part of the implication of Facebook’s relatively young age should be that we are able to discuss it as a dynamic process, instead of assigning it to a bygone era. But, whatever…

Actually, I think part of the reason there’s such lack of depth in discussing Facebook is also part of the reason it was so special: it was originally a very select service. Since, for a significant period of time, the service was only available to people with email addresses ending in “.edu,” it’s not really surprising that many of the people who keep discussing it were actually not on the service “in its formative years.” But, I would argue, the fact that it was so exclusive at first (something which is often repeated but which seems to be understood in a very theoretical sense) contributed quite significantly to its success. Of course, similar claims have been made but, I’d say that my own claim is deeper than others.

[Bang! I really don’t tend to make claims so, much of this blogpost sounds to me as if it were coming from somebody else…]

Ok, I don’t mean it so strongly. But there’s something I find neat about the Facebook of 2005, the one I joined. So I’d like to discuss it. Hence the placeholder.

And, in this placeholder, I’d fit: the ideas about responsiveness mentioned with Twitter, the stepwise approach adopted by Facebook (which, to me, was the real key to its eventual success), the notion of intimacy which is the true core of this blogpost, the notion of hype/counterhype linked to journalistic approaches, a key distinction between privacy and intimacy, some non-ranting (but still rambling) discussion as to what Google is missing in its “social” projects, anecdotes about “sequential network effects” on Facebook as the service reached new “populations,” some personal comments about what I get out of Facebook even though I almost never spent any significant amount of time on it, some musings as to the possibility that there are online services which have reached maturity and may remain stable in the foreseeable future, a few digressions about fanboism or about the lack of sophistication in the social network models used in online services, and maybe a bit of fun at the expense of “social media expert marketors”…

But that’ll be for another time.

Cheers!

WordPress as Content Directory: Getting Somewhere

{I tend to ramble a bit. If you just want a step-by-step tutorial, you can skip to here.}

Woohoo!

I feel like I’ve reached a milestone in a project I’ve had in mind, ever since I learnt about Custom Post Types in WordPress 3.0: Using WordPress as a content directory.

The concept may not be so obvious to anyone else, but it’s very clear to me. And probably much clearer for anyone who has any level of WordPress skills (I’m still a kind of WP newbie).

Basically, I’d like to set something up through WordPress to make it easy to create, review, and publish entries in content databases. WordPress is now a Content Management System and the type of “content management” I’d like to enable has to do with something of a directory system.

Why WordPress? Almost glad you asked.

These days, several of the projects on which I work revolve around WordPress. By pure coincidence. Or because WordPress is “teh awsum.” No idea how representative my sample is. But I got to work on WordPress for (among other things): an academic association, an adult learners’ week, an institute for citizenship and social change, and some of my own learning-related projects.

There are people out there arguing about the relative value of WordPress and other Content Management Systems. Sometimes, WordPress may fall short of people’s expectations. Sometimes, the pro-WordPress rhetoric is strong enough to sound like fanboism. But the matter goes beyond marketshare, opinions, and preferences.

In my case, WordPress just happens to be a rather central part of my life, these days. To me, it’s both a question of WordPress being “the right tool for the job” and the work I end up doing being appropriate for WordPress treatment. More than a simple causality (“I use WordPress because of the projects I do” or “I do these projects because I use WordPress”), it’s a complex interaction which involves diverse tools, my skillset, my social networks, and my interests.

Of course, WordPress isn’t perfect nor is it ideal for every situation. There are cases in which it might make much more sense to use another tool (Twitter, TikiWiki, Facebook, Moodle, Tumblr, Drupal..). And there are several things I wish WordPress did more elegantly (such as integrating all dimensions in a single tool). But I frequently end up with WordPress.

Here are some things I like about WordPress:

- Free Software ethos.

- Neat “.org vs .com” model for Open Source blogging software and freemium blogging service.

- Broad user and support communities around both the software and the service.

- WordCamps are a useful way to deepen knowledge of the software (and, to an extent, the service).

- The software is remarkably flexible.

- The service has some neat features, both free and paid.

- The software is easy to install on just about any webhost.

- The service is free in cost and headaches.

- Both software and service offer nice learning curves.

This last one is where the choice of WordPress for content directories starts making the most sense. Not only is it easy for me to use and build on WordPress but the learning curves are such that it’s easy for me to teach WordPress to others.

A nice example is the post editing interface (same in the software and service). It’s powerful, flexible, and robust, but it’s also very easy to use. It takes a few minutes to learn and is quite sufficient to do a lot of work.

This is exactly where I’m getting to the core idea for my content directories.

I emailed the following description to the digital content editor for the academic organization for which I want to create such content directories:

You know the post editing interface? What if instead of editing posts, someone could edit other types of contents, like syllabi, calls for papers, and teaching resources? What if fields were pretty much like the form I had created for [a committee]? What if submissions could be made by people with a specific role? What if submissions could then be reviewed by other people, with another role? What if display of these items were standardised?

Not exactly sure how clear my vision was in her head, but it’s very clear for me. And it came from different things I’ve seen about custom post types in WordPress 3.0.

For instance, the following post has been quite inspiring:

I almost had a drift-off moment.

But I wasn’t able to wrap my head around all the necessary elements. I perused and read a number of things about custom post types, I tried a few things. But I always got stuck at some point.

Recently, a valuable piece of the puzzle was provided by Kyle Jones (whose blog I follow because of his work on WordPress/BuddyPress in learning, a focus I share).

Setting up a Staff Directory using WordPress Custom Post Types and Plugins | The Corkboard.

As I discussed in the comments to this post, it contained almost everything I needed to make this work. But the two problems Jones mentioned were major hurdles, for me.

After reading that post, though, I decided to investigate further. I eventually got some material which helped me a bit, but it still wasn’t sufficient. Until tonight, I kept running into obstacles which made the process quite difficult.

Then, while trying to solve a problem I was having with Jones’s code, I stumbled upon the following:

Rock-Solid WordPress 3.0 Themes using Custom Post Types | Blancer.com Tutorials and projects.

This post was useful enough that I created a shortlink for it, so I could have it on my iPad and follow along: http://bit.ly/RockSolidCustomWP

By itself, it might not have been sufficient for me to really understand the whole process. And, following that tutorial, I replaced the first bits of code with use of the neat plugins mentioned by Jones in his own tutorial: More Types, More Taxonomies, and More Fields.

I played with this a few times but I can now provide an actual tutorial. I’m now doing the whole thing “from scratch” and will write down all steps.

This is with the WordPress 3.0 blogging software installed on a Bluehost account. (The WordPress.com blogging service doesn’t support custom post types.) I use the default Twenty Ten theme as a parent theme.

Since I use WordPress Multisite, I’m creating a new test blog (in Super Admin->Sites, “Add New”). Of course, this wasn’t required, but it helps me make sure the process is reproducible.

Since I already installed the three “More Plugins” (but they’re not “network activated”) I go in the Plugins menu to activate each of them.

I can now create the new “Product” type, based on that Blancer tutorial. To do so, I go to the “More Types” Settings menu, I click on “Add New Post Type,” and I fill in the following information: post type names (singular and plural) and the thumbnail feature. Other options are set by default.

I also set the “Permalink base” in Advanced settings. Not sure it’s required but it seems to make sense.

I click on the “Save” button at the bottom of the page (forgot to do this, the last time).
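For anyone who would rather not depend on the More Types plugin, roughly the same post type can be declared from a theme's functions.php. What follows is only a minimal sketch, not the code the plugin generates: the `proddir_` function names and the `products` permalink base are hypothetical placeholders, while `register_post_type()` and the `init` hook are standard WordPress 3.0 calls.

```php
<?php
// Sketch only: a hand-written stand-in for the "Product" type created in More Types.
// The proddir_* names and the "products" permalink base are assumptions.

function proddir_product_type_args() {
    return array(
        'labels'   => array(
            'name'          => 'Products', // plural name
            'singular_name' => 'Product',  // singular name
        ),
        'public'   => true,
        // "thumbnail" enables the featured image box used as the product image.
        'supports' => array( 'title', 'editor', 'excerpt', 'thumbnail', 'custom-fields' ),
        'rewrite'  => array( 'slug' => 'products' ), // assumed permalink base
    );
}

function proddir_register_product_type() {
    register_post_type( 'product', proddir_product_type_args() );
}

// Hook in only when WordPress is loaded, so the file can also be checked on its own.
if ( function_exists( 'add_action' ) ) {
    add_action( 'init', 'proddir_register_product_type' );
}
```

Keeping the arguments in their own function makes it easy to inspect or tweak them without touching the registration call.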

I then go to the “More Fields” settings menu to create a custom box for the post editing interface.

I add the box title and change the “Use with post types” options (no use in having this in posts).

(Didn’t forget to click “save,” this time!)

I can now add the “Price” field. To do so, I need to click on the “Edit” link next to the “Product Options” box I just created and click “Add New Field.”

I add the “Field title” and “Custom field key”:

I set the “Field type” to Number.

I also set the slug for this field.
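Whatever the plugin does behind the scenes, the value ends up stored as a regular custom field under the key entered here (assumed to be `price`, as in the theme code further down). A hedged sketch of how that value could be read back and formatted follows. The `proddir_` helper names are hypothetical, and the dollar sign with two decimals is just one possible display choice; `get_post_meta()` is the standard WordPress call.

```php
<?php
// Sketch only: reading and formatting the "price" custom field.
// Helper names are hypothetical; the "price" key is assumed from the tutorial.

// Turn a raw custom field value into a display string such as "$12.50".
// Returns an empty string when the value is missing or not numeric.
function proddir_format_price( $raw ) {
    if ( ! is_numeric( $raw ) ) {
        return '';
    }
    return '$' . number_format( (float) $raw, 2 );
}

// Fetch the price for a given post (requires WordPress to be loaded).
function proddir_get_price( $post_id ) {
    $raw = get_post_meta( $post_id, 'price', true ); // true = single value
    return proddir_format_price( $raw );
}
```

In a template, `echo proddir_get_price( get_the_ID() );` would print the formatted price for the current product.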

I then go to the “More Taxonomies” settings menu to add a new product classification.

I click “Add New Taxonomy,” and fill in taxonomy names, allow permalinks, add slug, and show tag cloud.

I also specify that this taxonomy is only used for the “Product” type.

(Save!)
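As with the post type, the taxonomy could also be declared in code instead of through More Taxonomies. This is a sketch under assumptions: the `catalog` key matches what the theme code below passes to `get_the_term_list()`, the taxonomy is taken to be hierarchical since terms can be given a parent, and the `proddir_` function names are hypothetical. `register_taxonomy()` itself is a standard WordPress function.

```php
<?php
// Sketch only: a hand-written stand-in for the taxonomy created in More Taxonomies.
// The proddir_* names are assumptions; "catalog" is the key used by the theme code.

function proddir_catalog_taxonomy_args() {
    return array(
        'labels'        => array(
            'name'          => 'Catalogs',
            'singular_name' => 'Catalog',
        ),
        'hierarchical'  => true,  // terms can have parents
        'show_tagcloud' => true,  // "show tag cloud" option
        'rewrite'       => array( 'slug' => 'catalog' ), // permalinks allowed, with slug
    );
}

function proddir_register_catalog_taxonomy() {
    // Second argument limits the taxonomy to the "product" post type.
    register_taxonomy( 'catalog', array( 'product' ), proddir_catalog_taxonomy_args() );
}

// Hook in only when WordPress is loaded.
if ( function_exists( 'add_action' ) ) {
    add_action( 'init', 'proddir_register_catalog_taxonomy' );
}
```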

Now, the rest is more directly taken from the Blancer tutorial. But instead of copy-paste, I added the files directly to a Twenty Ten child theme. The files are available in this archive.

Here’s the style.css code:

/*
Theme Name: Product Directory
Theme URI: http://enkerli.com/
Description: A product directory child theme based on Kyle Jones, Blancer, and Twenty Ten
Author: Alexandre Enkerli
Version: 0.1
Template: twentyten
*/

@import url("../twentyten/style.css");

The code for functions.php:

<?php
/**
 * ProductDir functions and definitions
 * @package WordPress
 * @subpackage Product_Directory
 * @since Product Directory 0.1
 */

/* Custom Columns */
// Replace the columns shown in the Products management screen.
add_filter("manage_edit-product_columns", "prod_edit_columns");
// Fill in each custom column, one cell at a time.
add_action("manage_posts_custom_column", "prod_custom_columns");

function prod_edit_columns($columns){
    $columns = array(
        "cb" => "<input type=\"checkbox\" />",
        "title" => "Product Title",
        "description" => "Description",
        "price" => "Price",
        "catalog" => "Catalog",
    );
    return $columns;
}

function prod_custom_columns($column){
    global $post;
    switch ($column)
    {
        case "description":
            the_excerpt();
            break;
        case "price":
            // "price" is the custom field key set in More Fields.
            $custom = get_post_custom();
            if (isset($custom["price"][0])) {
                echo $custom["price"][0];
            }
            break;
        case "catalog":
            // "catalog" is the taxonomy slug set in More Taxonomies.
            echo get_the_term_list($post->ID, 'catalog', '', ', ','');
            break;
    }
}
?>

And the code in single-product.php:

<?php
/**
 * Template Name: Product - Single
 * The Template for displaying all single products.
 *
 * @package WordPress
 * @subpackage Product_Dir
 * @since Product Directory 1.0
 */

get_header(); ?>

<div id="container">
<div id="content">
<?php the_post(); ?>
<?php
// Read the "price" custom field and prefix it with a dollar sign.
$custom = get_post_custom($post->ID);
$price = '$' . $custom["price"][0];
?>
<div id="post-<?php the_ID(); ?>">
<h1 class="entry-title"><?php the_title(); ?> - <?php echo $price; ?></h1>
<div class="entry-meta">
<div class="entry-content">
<div style="width: 30%; float: left;">
<?php the_post_thumbnail( array(100,100) ); ?>
<?php the_content(); ?></div>
<div style="width: 10%; float: right;">
Price
<?php echo $price; ?></div>
</div>
</div>
</div>
</div><!-- #content -->
</div><!-- #container -->
<?php get_footer(); ?>

That’s it!

Well, almost..

One thing is that I have to activate my new child theme.

So, I go to the “Themes” Super Admin menu and enable the Product Directory theme (this step isn’t needed with single-site WordPress).

I then activate the theme in Appearance->Themes (in my case, on the second page).

One thing I’ve learnt the hard way is that the permalink structure may not work if I don’t go and “nudge it.” So I go to the “Permalinks” Settings menu:

And I click on “Save Changes” without changing anything. (I know, it’s counterintuitive. And it’s even possible that it could work without this step. But I spent enough time scratching my head about this one that I find it important.)
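A likely explanation for the “nudge” is that visiting the Permalinks screen makes WordPress rebuild its rewrite rules, which is when the new post type's URLs become known. The same thing can be asked for in code with `flush_rewrite_rules()`. The sketch below is an assumption-laden alternative, not something from the tutorials followed here: the function name is hypothetical, and the `after_switch_theme` hook only exists in WordPress releases newer than the 3.0 used in this walkthrough (3.3 onward).

```php
<?php
// Sketch only: rebuild rewrite rules once, when the child theme is activated.
// The function name is hypothetical; after_switch_theme needs WordPress 3.3+.

// Returns true when the rules were flushed, false when WordPress isn't loaded.
function proddir_flush_product_rewrites() {
    if ( ! function_exists( 'flush_rewrite_rules' ) ) {
        return false;
    }
    // The "product" post type must already be registered at this point.
    flush_rewrite_rules();
    return true;
}

if ( function_exists( 'add_action' ) ) {
    add_action( 'after_switch_theme', 'proddir_flush_product_rewrites' );
}
```

Flushing is relatively expensive, so it belongs on a one-time event such as theme activation rather than on every page load.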

Now, I’m done. I can create new product posts by clicking on the “Add New” Products menu.

I can then fill in the product details, using the main WYSIWYG box as a description, the “price” field as a price, the “featured image” as the product image, and a taxonomy as a classification (by clicking “Add new” for any tag I want to add, and choosing a parent for some of them).

Now, in the product management interface (available in Products->Products), I can see the proper columns.

Here’s what the product page looks like:

And I’ve accomplished my mission.

The whole process can be achieved rather quickly, once you know what you’re doing. As I’ve been told (by the ever-so-helpful Justin Tadlock of Theme Hybrid fame, among other things), it’s important to get the data down first. While I agree with the statement and its implications, I needed to understand how to build these things from start to finish.

In fact, getting the data right is made relatively easy by my background as an ethnographer with a strong interest in cognitive anthropology, ethnosemantics, folk taxonomies (aka “folksonomies”), ethnography of communication, and ethnoscience. In other words, “getting the data” is part of my expertise.

The more technical aspects, however, were a bit difficult. I understood most of the principles and I could trace several puzzle pieces, but there’s a fair deal I didn’t know or hadn’t done myself. Putting together bits and pieces from diverse tutorials and posts didn’t work so well because it wasn’t always clear what went where or what had to remain unchanged in the code. I struggled with many details such as the fact that Kyle Jones’s code for custom columns wasn’t working first because it was incorrectly copied, then because I was using it on a post type which was “officially” based on pages (instead of posts). Having forgotten the part about “touching” the Permalinks settings, I was unable to get a satisfying output using Jones’s explanations (the fact that he doesn’t use titles didn’t really help me, in this specific case). So it was much harder for me to figure out how to do this than it now is for me to build content directories.

I still have some technical issues to face. Some which are near essential, such as a way to create archive templates for custom post types. Other issues have to do with features I’d like my content directories to have, such as clearly defined roles (the “More Plugins” support roles, but I still need to find out how to define them in WordPress). Yet other issues are likely to come up as I start building content directories, install them in specific contexts, teach people how to use them, observe how they’re being used and, most importantly, get feedback about their use.
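On the roles question, one possible direction is WordPress's own `add_role()` function. The sketch below is untested guesswork rather than a solution: the role names, display names, and the split of capabilities between “submitters” and “reviewers” are all hypothetical, and only `add_role()` and the capability names (`read`, `edit_posts`, `edit_others_posts`, `publish_posts`) come from WordPress itself.

```php
<?php
// Sketch only: two hypothetical roles for a submit-then-review workflow.

function proddir_directory_roles() {
    return array(
        // Can write entries and submit them for review, but not publish.
        'directory_submitter' => array(
            'label'        => 'Directory Submitter',
            'capabilities' => array(
                'read'       => true,
                'edit_posts' => true,
            ),
        ),
        // Can edit other people's entries and publish them.
        'directory_reviewer'  => array(
            'label'        => 'Directory Reviewer',
            'capabilities' => array(
                'read'              => true,
                'edit_posts'        => true,
                'edit_others_posts' => true,
                'publish_posts'     => true,
            ),
        ),
    );
}

function proddir_add_directory_roles() {
    foreach ( proddir_directory_roles() as $role => $definition ) {
        // add_role() stores the role in the database; existing roles are left alone.
        add_role( $role, $definition['label'], $definition['capabilities'] );
    }
}

if ( function_exists( 'add_action' ) ) {
    add_action( 'init', 'proddir_add_directory_roles' );
}
```

These are the generic post capabilities; tying roles to a specific custom post type would need more work than this sketch shows.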

But I’m past a certain point in my self-learning journey. I’ve built my confidence (an important but often dismissed component of gaining expertise and experience). I found proper resources. I understood what components were minimally necessary or required. I succeeded in implementing the system and testing it. And I’ve written enough about the whole process that things are even clearer for me.

And, who knows, I may get feedback, questions, or advice..

Installing BuddyPress on a Webhost

[Jump here for more technical details.]

A few months ago, I installed BuddyPress on my Mac to try it out. It was a bit of an involved process, so I documented it:

WordPress MU, BuddyPress, and bbPress on Local Machine « Disparate.

More recently, I decided to get a webhost. Both to run some tests and, eventually, to build something useful. BuddyPress seems like a good way to go at it, especially since it’s improved a lot, in the past several months.

In fact, the installation process is much simpler, now, and I ran into some difficulties because I was following my own instructions (though adapting the process to my webhost). So a new blogpost may be in order. My previous one was very (possibly too) detailed. This one is much simpler, technically.

One thing to make clear is that BuddyPress is a set of plugins meant for WordPress µ (“WordPress MU,” “WPMU,” “WPµ”), the multi-user version of the WordPress blogging platform. BP is meant as a way to make WPµ more “social,” with such useful features as flexible profiles, user-to-user relationships, and forums (through bbPress, yet another one of those independent projects based on WordPress).

While BuddyPress depends on WPµ and does follow a blogging logic, I’m thinking about it as a social platform. Once I build it into something practical, I’ll probably use the blogging features but, in a way, it’s more of a tool to engage people in online social activities. BuddyPress probably doesn’t work as a way to “build a community” from scratch. But I think it can be quite useful as a way to engage members of an existing community, even if this engagement follows a blogger’s version of a Pareto distribution (which, hopefully, is dissociated from elitist principles).

But I digress, of course. This blogpost is more about the practical issue of adding a BuddyPress installation to a webhost.

Webhosts have come a long way, recently. Especially in terms of shared webhosting focused on LAMP (or PHP/MySQL, more specifically) for blogs and content-management. I don’t have any data on this, but it seems to me that a lot of people these days are relying on third-party webhosts instead of relying on their own servers when they want to build on their own blogging and content-management platforms. Of course, there are a lot more people who prefer to use preexisting blog and content-management systems. For instance, it seems that there are more bloggers on WordPress.com than on other WordPress installations. And WP.com blogs probably represent a small number of people in comparison to the number of people who visit these blogs. So, in a way, those who run their own WordPress installations are a minority in the group of active WordPress bloggers which, itself, is a minority of blog visitors. Again, let’s hope this “power distribution” is not a basis for elite theory!

Yes, another digression. I did tell you to skip, if you wanted the technical details!

I became part of the “self-hosted WordPress” community through a project on which I started work during the summer. It’s a website for an academic organization and I’m acting as the organization’s “Web Guru” (no, I didn’t choose the title). The site was already based on WordPress but I was rebuilding much of it in collaboration with the then-current “Digital Content Editor.” Through this project, I got to learn a lot about WordPress, themes, PHP, CSS, etc. And it was my first experience using a cPanel- (and Fantastico-)enabled webhost (BlueHost, at the time). It’s also how I decided to install WordPress on my local machine and did some amount of work from that machine.

But the local installation wasn’t an ideal solution for two reasons: a) I had to be in front of that local machine to work on this project; and b) it was much harder to show the results to the person with whom I was collaborating.

So, in the Fall, I decided to get my own staging server. After a few quick searches, I decided on HostGator, partly because it was available on a monthly basis. Since this staging server was meant as a temporary solution, HG was close to ideal. It was easy to set up as a PayPal “subscription,” wasn’t that expensive (9$/month), had adequate support, and included everything that I needed at that point to install a current version of WordPress and play with theme files (after importing content from the original site). I’m really glad I made that decision because it made a number of things easier, including working from different computers, and sending links to get feedback.

While monthly HostGator fees were reasonable, it was still a more expensive proposition than what I had in mind for a longer-term solution. So, recently, a few weeks after releasing the new version of the organization’s website, I decided to cancel my HostGator subscription. A decision I made without any regret or bad feeling. HostGator was good to me. It’s just that I didn’t have any reason to keep that account or to do anything major with the domain name I was using on HG.

Though only a few weeks elapsed since I canceled that account, I didn’t immediately set out to transition to a new webhost. I didn’t go from HostGator to another webhost.

But having my own webhost still remained at the back of my mind as something which might be useful. For instance, while not really making a staging server necessary, a new phase in the academic website project brought up a sandboxing idea. Also, I went to a “WordPress Montreal” meeting and got to think about further WordPress development/deployment, including using BuddyPress for my own needs (both as my own project and as a way to build my own knowledge of the platform) instead of it being part of an organization’s project. I was also thinking about other interesting platforms which necessitate a webhost.

(More on these other platforms at a later point in time. Bottom line is, I’m happy with the prospects.)

So I wanted a new webhost. I set out to do some comparison shopping, as I’m wont to do. In my (allegedly limited) experience, finding the ideal webhost is particularly difficult. For one thing, search results are cluttered with a variety of “unuseful” things such as rants, advertising, and limited comparisons. And it’s actually not that easy to give a new webhost a try. For one thing, these hosting companies don’t necessarily have the most liberal refund policies you could imagine. And, switching a domain name between different hosts and registrars is a complicated process through which a name may remain “hostage.” Had I realized what was involved, I might have used a domain name to which I have no attachment or actually eschewed the whole domain transition and just tried the webhost without a dedicated domain name.

At any rate, I had a relatively hard time finding my webhost.

I really didn’t need “bells and whistles.” For instance, all the AdSense, shopping cart, and other business-oriented features which seem to be publicized by most webhosting companies hold no interest for me.

I didn’t even care so much about absolute degree of reliability or speed. What I’m to do with this host is fairly basic stuff. The core idea is to use my own host to bypass some limitations. For instance, WordPress.com doesn’t allow for plugins yet most of the WordPress fun has to do with plugins.

I did want an “unlimited” host, as much as possible. Not because I expect to have huge resource needs, but because I just didn’t want to have to monitor bandwidth.

I thought that my needs would be basic enough that any cPanel-enabled webhost would fit. As far as I could see, I needed FTP access to something which had PHP 5 and MySQL 5. I expected to install things myself, without using the webhost’s scripts, but I also thought the host would have some useful scripts. Although I had already registered the domain I wanted to use (through Name.com), I thought it might be useful to have a free domain in the webhosting package. Not that domain names are expensive; it’s more a matter of convenience in terms of payment or setup.

I ended up with FatCow. But, honestly, I’d probably go with a different host if I were to start over (which I may do with another project).

I paid 88$ for two years of “unlimited” hosting, which is quite reasonable. And, on paper, FatCow has everything I need (and a bunch of things I don’t need). The missing parts aren’t anything major but have to do with minor annoyances. In other words, no real deal-breaker, here. But there are a few things I wish I had realized before I committed to FatCow with a domain name I actually want to use.

Something which was almost a deal-breaker for me is the fact that FatCow requires payment for any additional subdomain. And these aren’t cheap: the minimum is 5$/month for five subdomains, up to 25$/month for unlimited subdomains! Even at a “regular” price of 88$/year for the basic webhosting plan, the “unlimited subdomains” feature (included in some webhosting plans elsewhere) is more than three times more expensive than the core plan.
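To make the comparison concrete, here’s a quick back-of-the-envelope calculation, as a minimal Python sketch. The figures are the ones quoted above (88$/year and 25$/month). The function names are just made up for the illustration.

```python
# Prices as quoted in this post, in dollars (not taken from any official price list).
BASE_PLAN_PER_YEAR = 88               # "regular" yearly price of the basic plan
UNLIMITED_SUBDOMAINS_PER_MONTH = 25   # "unlimited subdomains" add-on


def yearly_cost(monthly_price):
    """Convert a monthly fee into a yearly one."""
    return monthly_price * 12


def cost_ratio(addon_per_month, plan_per_year):
    """How many times the yearly plan price the add-on costs per year."""
    return yearly_cost(addon_per_month) / plan_per_year


ratio = cost_ratio(UNLIMITED_SUBDOMAINS_PER_MONTH, BASE_PLAN_PER_YEAR)
print(f"{yearly_cost(UNLIMITED_SUBDOMAINS_PER_MONTH)}$ per year, "
      f"{ratio:.2f} times the basic plan")
```

So the add-on comes to 300$ a year, or roughly 3.4 times the plan it’s added to.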

As I don’t absolutely need extra subdomains, this is mostly a minor irritant. But it’s one reason I’ll probably be using another webhost for other projects.

Other issues with FatCow are probably not enough to motivate a switch.

For instance, the PHP version installed on FatCow (5.2.1) is a few minor releases behind the one needed by some interesting web applications. No biggie, especially if PHP is updated in a relatively reasonable timeframe. But still makes for a slight frustration.

The MySQL version seems recent enough, but FatCow uses non-standard tools to manage it, which makes for some confusion. Attempting to create a MySQL database with an obvious name (say “wordpress”) fails because the database allegedly exists (even though it doesn’t show up in the MySQL administration). In the same vein, the hostname of the MySQL server is <username>.fatcowmysql.com instead of the localhost most installers seem to expect. Easy to handle once you realize it, but puzzling at first.

In terms of Fantastico-like simplified installation of webapps, FatCow uses InstallCentral, which looks like it might be its own Fantastico replacement. InstallCentral is decent enough as an installation tool and FatCow does provide for some of the most popular blog and CMS platforms. But, in some cases, the application version installed by FatCow is old enough (2005!) that it requires multiple upgrades to get to a current version. Compared to other installation tools, FatCow’s InstallCentral doesn’t seem really efficient at keeping track of installed and released versions.

Something which is partly a neat feature and partly a potential issue is the way FatCow handles Apache-related security. This isn’t something which is so clear to me, so I might be wrong.

Accounts on both BlueHost and HostGator include a public_html directory where all sorts of things go, especially if they’re related to publicly-accessible content. This directory serves as the website’s root, so one expects content to be available there. The “index.html” or “index.php” file in this directory serves as the website’s frontpage. It’s fairly obvious, but it does require understanding a few things about webservers. FatCow doesn’t seem to create a public_html directory in a user’s server space. Or, more accurately, it seems that the root directory (aka ‘/’) is in fact public_html. In this sense, a user doesn’t have to think about which directory to use to share things on the Web. But it also means that some higher-level directories aren’t available. I’ve already run into some issues with this and I’ll probably be looking for a workaround. I’m assuming there’s one. But it’s sometimes easier to use generally-applicable advice than to find a custom solution.

Further, in terms of access control… It seems that webapps typically make use of diverse directories and .htaccess files to manage some forms of access controls. Unix-style file permissions are also involved but the kind of access needed for a web app is somewhat different from the “User/Group/All” of Unix filesystems. AFAICT, FatCow does support those .htaccess files. But it has its own tools for building them. That can be a neat feature, as it makes it easier, for instance, to password-protect some directories. But it could also be the source of some confusion.

There are other issues I have with FatCow, but it’s probably enough for now.

So… On to the installation process… 😉

It only takes a few minutes and is rather straightforward. This is the most verbose version of that process you could imagine…

Surprised? 😎

Disclaimer: I’m mostly documenting how I did it and there are some things about which I’m unclear. So it may not work for you. If it doesn’t, I may be able to help but I provide no guarantee that I will. I’m an anthropologist, not a Web development expert.

As always, YMMV.

A few instructions here are specific to FatCow, but the general process is probably valid on other hosts.

I’m presenting things in a sequence which should make sense. I used a slightly different order myself, but I think this one should still work. (If it doesn’t, drop me a comment!)

In these instructions, quotation marks (“”) are used to isolate elements from the rest of the text. They shouldn’t be typed or pasted.

I use “example.com” to refer to the domain on which the installation is done. In my case, it’s the domain name I transferred to FatCow from another registrar but it could probably be done without a dedicated domain (in which case it would be “<username>.fatcow.com” where “<username>” is your FatCow username).

I started by creating a MySQL database for WordPress MU. FatCow does have phpMyAdmin but the default tool in the cPanel is labeled “Manage MySQL.” It’s slightly easier to use for creating new databases than phpMyAdmin because it creates the database and initial user (with confirmed password) in a single, easy-to-understand dialog box.

The rest of the process relies on the following four archives:

- WordPress MU (wordpress-mu-2.9.1.1.zip, in my case)

- Buddymatic (buddymatic.0.9.6.3.1.zip, in my case)

- EarlyMorning (only one version, it seems)

- EarlyMorning-BP (only one version, it seems)

Only the WordPress MU archive is needed to install BuddyPress. The last three files are needed for EarlyMorning, a BuddyPress theme that I found particularly neat. It’s perfectly possible to install BuddyPress without this specific theme. (Although, doing so, you need to install a BuddyPress-compatible theme, if only by moving some folders to make the default theme available, as I explained in point 15 in that previous tutorial.) Buddymatic itself is a theme framework which includes some child themes, so you don’t need to install EarlyMorning. But installing it is easy enough that I’m adding instructions related to that theme.

These files can be uploaded anywhere in my FatCow space. I uploaded them to a kind of test/upload directory, just to make it clear, for me.

A major FatCow idiosyncrasy is its FileManager (actually called “FileManager Beta” in the documentation but showing up as “FileManager” in the cPanel). From my experience with both BlueHost and HostGator (two well-known webhosting companies), I can say that FC’s FileManager is quite limited. One thing it doesn’t do is uncompress archives. So I have to resort to the “Archive Gateway,” which is surprisingly slow and cumbersome.

At any rate, I used that Archive Gateway to uncompress the four files. WordPress µ first (in the root directory or “/”), then both Buddymatic and EarlyMorning in “/wordpress-mu/wp-content/themes” (you can choose the output directory for zip and tar files), and finally EarlyMorning-BP (anywhere, individual files are moved later). To uncompress each file, select it in the dropdown menu (it can be located in any subdirectory, Archive Gateway looks everywhere), add the output directory in the appropriate field in the case of Buddymatic or EarlyMorning, and press “Extract/Uncompress”. Wait to see a message (in green) at the top of the window saying that the file has been uncompressed successfully.
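For comparison, the same unpacking could presumably be done on a local machine, with the result uploaded by FTP afterwards. That’s an assumption on my part, not what I did with Archive Gateway. Here’s a minimal Python sketch of the idea, using the standard zipfile module. The function name is hypothetical and the paths in the comments simply mirror the ones above.

```python
import zipfile
from pathlib import Path


def extract_archive(archive_path, output_dir):
    """Unpack a zip archive into output_dir and return the names it contained."""
    output = Path(output_dir)
    # Create the output directory (and any missing parents) if needed.
    output.mkdir(parents=True, exist_ok=True)
    with zipfile.ZipFile(archive_path) as archive:
        archive.extractall(output)
        return archive.namelist()


# Example calls mirroring the steps above (commented out, since they
# need the actual archives to be present in the working directory):
# extract_archive("wordpress-mu-2.9.1.1.zip", ".")
# extract_archive("buddymatic.0.9.6.3.1.zip", "wordpress-mu/wp-content/themes")
```

The order matters in the same way as with Archive Gateway: the WordPress µ archive goes first, so that the themes directory exists in the right place for the others.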

Then, in the FileManager, the contents of the EarlyMorning-BP directory have to be moved to “/wordpress-mu/wp-content/themes/earlymorning”. (Thought they could be uncompressed there directly, but it created an extra folder.) To move those files in the FileManager, I browse to that earlymorning-bp directory, click on the checkbox to select all, click on the “Move” button (fourth from right, marked with a blue folder), and add the output path: /wordpress-mu/wp-content/themes/earlymorning
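The move itself is simple enough to sketch in a few lines of Python, for anyone doing this step on a local copy rather than through the FileManager (an assumption, since I used the FileManager). The function name is made up. The sketch doesn’t handle name clashes, and what happens when a file of the same name already exists in the target depends on the platform.

```python
import shutil
from pathlib import Path


def move_contents(source_dir, target_dir):
    """Move every file and folder found directly inside source_dir into target_dir."""
    source, target = Path(source_dir), Path(target_dir)
    target.mkdir(parents=True, exist_ok=True)
    moved = []
    for item in sorted(source.iterdir()):
        # The contents are moved, not the enclosing folder itself,
        # which avoids ending up with an extra folder level.
        shutil.move(str(item), str(target / item.name))
        moved.append(item.name)
    return moved


# Example call mirroring the step above (commented out, needs real directories):
# move_contents("earlymorning-bp", "wordpress-mu/wp-content/themes/earlymorning")
```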

These files are tweaks to make the EarlyMorning theme work with BuddyPress.

Then, I had to change two files, through the FileManager (it could also be done with an FTP client).

One change is to EarlyMorning’s style.css:

/wordpress-mu/wp-content/themes/earlymorning/style.css

There, “Template: thematic” has to be changed to “Template: buddymatic” (so, “the” should be changed to “buddy”).

That change is needed because the EarlyMorning theme is a child theme of the “Thematic” WordPress parent theme. Buddymatic is a BuddyPress-savvy version of Thematic and this changes the child-parent relation from Thematic to Buddymatic.
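The edit is a one-word change, so doing it by hand is fine. Still, as an illustration, here’s a small Python sketch which rewrites the “Template:” line in the text of a style.css file. The function name is hypothetical, and the sample header in the usage example is made up for the demonstration (EarlyMorning’s actual header has more lines).

```python
import re

# Matches a "Template: something" header line, keeping the label in group 1.
TEMPLATE_LINE = re.compile(
    r"^([ \t]*Template:[ \t]*)\S+[ \t]*(?=\r?$)",
    re.IGNORECASE | re.MULTILINE,
)


def switch_parent_theme(stylesheet_text, new_parent="buddymatic"):
    """Return the stylesheet text with its first Template header pointing to new_parent."""
    return TEMPLATE_LINE.sub(
        lambda match: match.group(1) + new_parent,
        stylesheet_text,
        count=1,
    )


# Made-up sample header, for illustration only.
sample = "/*\nTheme Name: Early Morning\nTemplate: thematic\n*/\n"
print(switch_parent_theme(sample))
```

Only the first “Template:” line is touched. The rest of the stylesheet is returned unchanged.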

The other change is in the Buddymatic “extensions”:

/wordpress-mu/wp-content/themes/buddymatic/library/extensions/buddypress_extensions.php

There, on line 39, “$bp->root_domain” should be changed to “bp_root_domain()”.

This change is needed because of something I’d consider a bug but that a commenter on another blog was kind enough to troubleshoot. Without this modification, the login button in BuddyPress wasn’t working because it was going to the website’s root (example.com/wp-login.php) instead of the WPµ installation (example.com/wordpress-mu/wp-login.php). I was quite happy to find this workaround but I’m not completely clear on the reason it works.
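Judging from the symptom alone, the two expressions seem to yield different base URLs: one pointing to the domain’s root, the other to the directory in which WPµ is installed. That’s an assumption, not something checked against the BuddyPress source. A rough sketch of the difference, in Python rather than PHP, with a made-up helper function:

```python
from urllib.parse import urljoin


def login_url(base_url):
    """Append wp-login.php to a base URL, treating the base as a directory."""
    if not base_url.endswith("/"):
        base_url += "/"
    return urljoin(base_url, "wp-login.php")


# Assumed values: what each expression appears to resolve to in this setup.
domain_root = "http://example.com"
install_root = "http://example.com/wordpress-mu"

print(login_url(domain_root))   # the broken link: http://example.com/wp-login.php
print(login_url(install_root))  # the working link: http://example.com/wordpress-mu/wp-login.php
```

With WPµ installed at the site’s root, both URLs would be the same, which may explain why the issue only shows up with a subdirectory installation.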

Then, something I did which might not be needed is to rename the “wordpress-mu” directory. Without that change, the BuddyPress installation would sit at “example.com/wordpress-mu,” which seems a bit cryptic for users. In my mind, “example.com/<name>,” where “<name>” is something meaningful like “social” or “community” works well enough for my needs. Because FatCow charges for subdomains, the “<name>.example.com” option would be costly.

(Of course, WPµ and BuddyPress could be installed in the site’s root and the frontpage for “example.com” could be the BuddyPress frontpage. But since I think of BuddyPress as an add-on to a more complete site, it seems better to have it as a level lower in the site’s hierarchy.)

With all of this done, the actual WPµ installation process can begin.

The first thing is to browse to that directory in which WPµ resides, either “example.com/wordpress-mu” or “example.com/<name>” with the “<name>” you chose. You’re then presented with the WordPress µ Installation screen.

Since FatCow charges for subdomains, it’s important to choose the following option: “Sub-directories (like example.com/blog1).” It’s actually by selecting the other option that I realized that FatCow restricted subdomains.
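The difference between the two options comes down to where the blog’s name ends up in the address. A minimal Python sketch, with a hypothetical function name and “blog1” as the sample blog name used by the installer’s own label:

```python
def blog_url(domain, blog_name, use_subdomains=False):
    """Build the address of a blog under either of the two installer options."""
    if use_subdomains:
        # Each blog gets its own subdomain, which FatCow charges for.
        return f"http://{blog_name}.{domain}/"
    # Each blog lives in a subdirectory of the same domain.
    return f"http://{domain}/{blog_name}/"


print(blog_url("example.com", "blog1"))                       # http://example.com/blog1/
print(blog_url("example.com", "blog1", use_subdomains=True))  # http://blog1.example.com/
```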

The Database Name, username and password are the ones you created initially with Manage MySQL. If you forgot that password, you can actually change it with that same tool.

An important FatCow-specific point, here, is that “Database Host” should be “<username>.fatcowmysql.com” (where “<username>” is your FatCow username). In my experience, other webhosts use “localhost” and WPµ defaults to that.
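As a tiny illustration of that naming pattern, here’s a Python sketch which returns the value to type in the “Database Host” field. The function name and the “jdoe” username are made up.

```python
def database_host(fatcow_username=None):
    """Return the "Database Host" value: FatCow's pattern, or the usual default."""
    if fatcow_username:
        return f"{fatcow_username}.fatcowmysql.com"
    # What WPµ offers by default, and what other webhosts seem to use.
    return "localhost"


print(database_host("jdoe"))  # jdoe.fatcowmysql.com
print(database_host())        # localhost
```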

You’re asked to give a name to your blog. In a way, though, if you think of BuddyPress as more of a platform than a blogging system, that name should be rather general. As you’re installing “WordPress Multi-User,” you’ll be able to create many blogs with more specific names, if you want. But the name you’re entering here is for BuddyPress as a whole. As with <name> in “example.com/<name>” (instead of “example.com/wordpress-mu”), it’s a matter of personal opinion.

Something I noticed with the EarlyMorning theme is that it’s a good idea to keep the main blog’s name relatively short. I used thirteen characters and it seemed to fit quite well.

Once you’re done filling in this page, WPµ is installed in a flash. You’re then presented with some information about your installation. It’s probably a good idea to note down some of that information, including the full paths to your installation and the administrator’s password.