If indeed Apple plans to announce not just more affordable textbook options for students, but also more interactive, immersive ebook experiences…

Forecasting next week’s Apple education event (Dan Moren and Lex Friedman for Macworld)

I’m still in catchup mode (was sick during the break), but it’s hard to let this pass. It’s exactly the kind of thing I like to blog about: wishful thinking and speculation about education. Sometimes, my crazy predictions are fairly accurate. But my pleasure at blogging these things has little to do with the predictions game. I’m no prospectivist. I just like to build wishlists.

In this case, I’ll try to make it short. But I’m having drift-off moments just thinking about the possibilities. I do have a lot to say about this but we’ll see how things go.

Overall, I agree with the three main predictions in that Macworld piece: Apple might come out with eBook creation tools, office software, and desktop reading solutions. I’m interested in all of these and have been thinking about the implications.

That Macworld piece, like most media coverage of textbooks these days, talks about the weight of physical textbooks as a major issue. It’s a common refrain and large bookbags/backpacks have symbolized a key problem with “education”. Moren and Friedman finish up with a zinger about lecturing. Also a common complaint. In fact, I’ve been on the record (for a while) about issues with lecturing. Which is where I think more reflection might help.

For one thing, alternative models to lecturing can imply more than a quip about the entertainment value of teaching. Inside the teaching world, there’s a lot of talk about the notion that teaching is a lot more than providing access to content. There’s a huge difference between reading a book and taking a class. But it sounds like this message isn’t heard and that there’s a lot of misunderstanding about the role of teaching.

It’s quite likely that Apple’s announcement may make things worse.

I don’t like textbooks but I do use them. I’m not the only teacher who dislikes textbooks while still using them. But I feel the need to justify myself. In fact, I’ve been on the record about this. So, in that context, I think improvements in textbooks may distract us from a bigger issue and even lead us in the wrong direction. By focusing even more on content-creation, we’re commodifying education. What’s more, we’re subsuming education under a publishing model. We all know how that’s going. What’s tragic, IMHO, is that textbook publishers themselves are going in the direction of magazines! If, ten years from now, people want to know when we went wrong with textbook publishing, it’ll probably be a good idea for them to trace back from now. In theory, magazine-style textbooks may make a lot of sense to those who perceive learning to be indissociable from content consumption. I personally consider these magazine-style textbooks to be the most egregious of aberrations because, in practice, learning is radically different from content consumption.

So… If, on Thursday, Apple ends up announcing deals with textbook publishers to make it easier for them to, say, create and distribute free ad-supported magazine-style textbooks, I’ll be going through a large range of very negative emotions. Coming out of it, I might perceive a silver lining in the fact that these things can fairly easily be subverted. I like this kind of technological subversion and it makes me quite enthusiastic.

In fact, I’ve had this thought about iAd Producer (Apple’s tool for creating mobile ads). Never tried it but, when I heard about it, it sounded like something which could make it easy to produce interactive content outside of mobile advertising. I don’t think the tool itself is restricted to Apple’s iAd, but I could see how the company might use the same underlying technology to create some content-creation tool.

“But,” you say, “you just said that you think learning isn’t about content.” Quite so. I’m not saying that I think these tools should be the future of learning. But creating interactive content can be part of something wider, which does relate to learning.

The point isn’t that I don’t like content. The point is that I don’t think content should be the exclusive focus of learning. To me, allowing textbook publishers to push more magazine-style content more easily is going in the wrong direction. Allowing diverse people (including learners and teachers) to easily create interactive content might in fact be a step in the right direction. It’s nothing new, but it’s an interesting path.

In fact, despite my dislike of a content emphasis in learning, I’m quite interested in “learning objects”. In fact, I did a presentation about them during the Spirit of Inquiry conference at Concordia, a few years ago (PDF).

A neat (but Flash-based) example of a learning object was introduced to me during that same conference: Mouse Party. The production value is quite high, the learning content seems relatively high, and it’s easily accessible.

But it’s based on Flash.

Which leads me to another part of the issue: formats.

I personally try to avoid Flash as much as possible. While a large number of people have done amazing things with Flash, it’s my sincere (and humble) opinion that Flash’s time has come and gone. I do agree with Steve Jobs on this. Not out of fanboism (I’m no Apple fanboi), not because I have something against Adobe (I don’t), not because I have a vested interest in an alternative technology. I just think that mobile Flash isn’t going anywhere and that, even on the desktop, Flash-free is the way to go. Never installed Flash on my desktop computer, since I bought it in July. I do run Chrome for the occasional Flash-only video. But Flash isn’t the only video format out there and I almost never come across interesting content which actually relies on something exclusive to Flash. Flash-based standalone apps (like Rdio and Machinarium) are a different issue as Flash was more of a development platform for them and they’re available as Flash-free apps on Apple’s own iOS.

I wouldn’t be surprised if Apple’s announcements had something to do with a platform for interactive content as an alternative to Adobe Flash. In fact, I’d be quite enthusiastic about that. Especially given Apple’s mobile emphasis. We might be getting further in “mobile computing for the rest of us”.

Part of this may be related to HTML5. I was quite enthusiastic when Tumult released its “Hype” HTML5-creation tool. I only used it to create an HTML5 version of my playfulness talk. But I enjoyed it and can see a lot of potential.

Especially in view of interactive content. It’s an old concept and there are many tools out there to create interactive content (from Apple’s own QuickTime to Microsoft PowerPoint). But the shift to interactive content has been slower than many people (including educational technologists) would have predicted. In other words, there’s still a lot to be done with interactive content. Especially if you think about multitouch-based mobile devices.

Which eventually brings me back to learning and teaching.

I don’t “teach naked”, I do use slides in class. In fact, my slides are mostly bullet points, something presentation specialists like to deride. Thing is, though, my slides aren’t really meant for presentation and, while they sure are “content”, I don’t really use them as such. Basically, I use them as a combination of cue cards, whiteboard, and coursenotes. Though I may sound defensive about this, I’m quite comfortable with my use of slides in the classroom.

Yet, I’ve been looking intently for other solutions.

For instance, I used to create outlines in OmniOutliner that I would then send to LaTeX to produce both slides and printable outlines (as PDFs). I’ve thought about using S5, but it doesn’t really fit in my workflow. So I end up creating Keynote files on my Mac, uploading them (as PowerPoint) before class, and presenting them in the classroom from my iPad. Not ideal, but rather convenient.

(Interestingly enough, the main thing I need to do today is create PowerPoint slides as ancillary material for a textbook.)

In all of these cases, the result isn’t really interactive. Sure, I could add buttons and interactive content to the slides. But the basic model is linear, not interactive. The reason I don’t feel bad about it is that my teaching is very interactive (the largest proportion of classtime is devoted to open discussions, even with 100-plus students). But I still wish I could have something more appropriate.

I have used other tools, especially whiteboarding and mindmapping ones. Basically, I elicit topics and themes from students and we discuss them in a semi-structured way. But flow remains an issue, both in terms of workflow and in terms of conversation flow.

So if Apple were to come up with tools making it easy to create interactive content, I might integrate them in my classroom work. A “killer feature” here would be the ability to record interaction during class and then upload it as an interactive podcast (à la ProfCast).

Of course, content-creation tools might make a lot of sense outside the classroom. Not only could they help distribute the results of classroom interactions but they could help in creating learning material to be used ahead of class. These could include the aforementioned learning objects (like Mouse Party) as well as interactive quizzes (like Hot Potatoes) and even interactive textbooks (like Moglue) and educational apps (plenty of these in the App Store).

Which brings me back to textbooks, the alleged focus of this education event.

One of my main issues with textbooks, including online ones, is usability. I read pretty much everything online, including all the material for my courses (on my iPad) but I find CourseSmart and its ilk to be almost completely unusable. These online textbooks are, in my experience, much worse than scanned and OCRed versions of the same texts (in part because they don’t allow for offline access but also because they make navigation much more difficult than in GoodReader).

What I envision is an improvement over PDFs.

Part of the issue has to do with PDF itself. Despite all its benefits, Adobe’s “Portable Document Format” is the relic of a bygone era. Sure, it’s ubiquitous and can preserve formatting. It’s also easy to integrate in diverse tools. In fact, if I understand things correctly, PDF replaced Display PostScript as the basis for Quartz 2D, a core part of Mac OS X’s graphics rendering. But it doesn’t mean that it can’t be supplemented by something else.

Part of the improvement has to do with flexibility. Because of its emphasis on preserving print layouts, PDF tends to enforce print-based ideas. This is where EPUB is at a significant advantage. In a way, EPUB textbooks might be the first step away from the printed model.

From what I can gather, EPUB files are a bit like Web archives. Unlike PDFs, they can be reformatted at will, just like webpages can. In fact, iBooks and other EPUB readers (including Adobe’s, IIRC) allow for on-the-fly reformatting, which puts the reader in control of a much greater part of the reading experience. This is exactly the kind of thing publishers fail to grasp: readers, consumers, and users want more control over the experience. EPUB textbooks would thus be easier to read than PDFs.
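To make the “Web archive” comparison a bit more concrete, here’s a minimal Python sketch. It relies on one thing I’m sure of: an EPUB is a ZIP container whose first entry is a `mimetype` file, with the book itself stored as XHTML and CSS. Everything else is an assumption for illustration: the file names (`OEBPS/chapter1.xhtml`, `OEBPS/style.css`) and both helper functions are made up, and the sample archive is deliberately incomplete (the `container.xml` is a placeholder), so it isn’t a valid EPUB.

```python
import io
import zipfile


def build_sample_epub() -> bytes:
    """Build a tiny EPUB-like archive in memory (illustrative, not a valid EPUB)."""
    buf = io.BytesIO()
    with zipfile.ZipFile(buf, "w") as zf:
        # EPUB containers start with an uncompressed "mimetype" entry.
        zf.writestr("mimetype", "application/epub+zip",
                    compress_type=zipfile.ZIP_STORED)
        # Placeholder only: a real container.xml points to the package file.
        zf.writestr("META-INF/container.xml", "<container/>")
        # Hypothetical content files: plain web-style markup and styling.
        zf.writestr("OEBPS/chapter1.xhtml",
                    "<html><body><p>Hello</p></body></html>")
        zf.writestr("OEBPS/style.css", "p { font-size: 1em; }")
    return buf.getvalue()


def list_reflowable_parts(epub_bytes: bytes) -> list[str]:
    """Return the web-style files (markup and stylesheets) inside the archive."""
    with zipfile.ZipFile(io.BytesIO(epub_bytes)) as zf:
        return sorted(
            name for name in zf.namelist()
            if name.endswith((".xhtml", ".html", ".css"))
        )


if __name__ == "__main__":
    for part in list_reflowable_parts(build_sample_epub()):
        print(part)
```

Since the text lives in ordinary markup and the look lives in a stylesheet, a reading app can swap fonts or sizes without touching the content, which is roughly what I mean by reformatting at will.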

EPUB is the basis for Apple’s iBooks and iBookstore and people seem to be assuming that Thursday’s announcement will be about iBooks. Makes sense and it’d be nice to see an improvement over iBooks. For one thing, it could support EPUB 3. There are conversion tools but, AFAICT, iBooks is stuck with EPUB 2.0. An advantage there is that EPUBs can possibly include scripts and interactivity. Which could make things quite interesting.

Interactive formats abound. In fact, PDFs can include some interactivity. But, as mentioned earlier, there’s a lot of room for improvement in interactive content. In part, creation tools could be “democratized”.

Which gets me thinking about recent discussions over the fate of HyperCard. While I understand John Gruber’s longstanding position, I find room for HyperCard-like tools. Like some others, I even had some hopes for ATX-based TileStack (an attempt to bring HyperCard stacks back to life, online). And I could see some HyperCard thinking in an alternative to both Flash and PDF.

“Huh?”, you ask?

Well, yes. It may sound strange but there’s something about HyperCard which could make sense in the longer term. Especially if we get away from the print model behind PDFs and the interaction model behind Flash. And learning objects might be the ideal context for this.

Part of this is about hyperlinking. It’s no secret that HyperCard was among HTML’s precursors. As the part of HTML which we just take for granted, hyperlinking is among the most undervalued features of online content. Sure, we understand the value of sharing links on social networking systems. And there’s a lot to be said about bookmarking. In fact, I’ve been thinking about social bookmarking and I have a wishlist about sharing tools, somewhere. But I’m thinking about something much more basic: hyperlinking is one of the major differences between online and offline writing.

Think about the differences between, say, a Wikibook and a printed textbook. My guess is that most people would focus on the writing style, tone, copy-editing, breadth, reviewing process, etc. All of these are relevant. In fact, my sociology classes came up with variations on these as disadvantages of the Wikibook over printed textbooks. Prior to classroom discussion about these differences, however, I mentioned several advantages of the Wikibook:

- Cover bases

- Straightforward

- Open Access

- Editable

- Linked

(Strangely enough, embedded content from iWork.com isn’t available and I can’t log into my iWork.com account. Maybe it has to do with Thursday’s announcement?)

That list of advantages is one I’ve been using since I started to use this Wikibook… except for the last one. And this is one which hit me, recently, as being more important than the others.

So, in class, I talked about the value of links and it’s been on my mind quite a bit. Especially in view of textbooks. And critical thinking.

See, academic (and semi-academic) writing is based on references, citations, quotes. English-speaking academics are likely to be the people in the world of publishing who cite the most profusely. It’s not rare for a single paragraph of academic writing in English to contain ten citations or more, often strung together in parentheses (Smith 1999, 2005a, 2005b; Smith and Wesson 1943, 2010). And I’m not talking about Proust-style paragraphs either. I’m convinced that, with some quick searches, I could come up with a paragraph of academic writing which has less “narrative content” than citation.

Textbooks aren’t the most egregious example of what I’d consider over-citing. But they do rely on citations quite a bit. As I work more specifically on textbook content, I notice even more clearly the importance of citations. In fact, in my head, I started distinguishing some patterns in textbook content. For instance, there are sections which mostly contain direct explanations of key concepts while other sections focus on personal anecdotes from the authors or extended quotes from two sides of a debate. But among the most obvious sections are summaries of key texts.

For instance (hypothetical example):

As Nora Smith explained in her 1968 study Coming Up with Something to Say, the concept of interpretation has a basis in cognition.

…

Smith (1968: 23) argued that Peirce’s interpretant had nothing to do with theatre.

These citations are less conspicuous than they’d be in peer-reviewed journals. But they’re a central part of textbook writing. One of their functions should be to allow readers (undergraduate students, mostly) to learn more about a topic. So, when a student wants to know more about Nora Smith’s reading of Peirce, she “just” has to locate Smith’s book, go to the right page, scan the text for the name “Peirce”, and read the relevant paragraph. Nothing to it.

Compare this to, say, a blogpost. I only cite one text, here. But it’s linked instead of being merely cited. So readers can quickly know more about the context for what I’m discussing before going to the library.

Better yet, this other blogpost of mine is typical of what I’ve been calling a linkfest, a post containing a large number of links. Had I put citations instead of links, the “narrative” content of this post would be much less than the citations. Basically, the content was a list of contextualized links. Much textbook content is just like that.

In my experience, online textbooks are citation-heavy and derive almost no benefit from linking. Oh, sure, some publisher may replace citations with links. But the result would still not be the same as writing meant for online reading because ex post facto link additions are quite different from link-enhanced writing. I’m not talking about technological determinism, here. I’m talking about appropriate tool use. Online texts can be quite different from printed ones and writing for an online context could benefit greatly from this difference.

In other words, I care less about what tools publishers are likely to use to create online textbooks than about a shift in the practice of online textbooks.

So, if Apple comes out with content-creation tools on Thursday (which sounds likely), here are some of my wishes:

- Use of open standards like HTML5 and EPUB (possibly a combination of the two).

- Completely cross-platform (should go without saying, but Apple’s track record isn’t that great, here).

- Open Access.

- Link library.

- Voice support.

- Mobile creation tools as powerful as desktop ones (more like GarageBand than like iWork).

- HyperCard-style emphasis on hyperlinked structures (à la “mini-site” instead of web archives).

- Focus on rich interaction (possibly based on the SproutCore web framework).

- Replacement for iWeb (which is being killed along with MobileMe).

- Ease creation of lecturecasts.

- Deep integration with iTunes U.

- Combination of document (à la Pages or Word), presentation (à la Keynote or PowerPoint), and standalone apps (à la The Elements or even Myst).

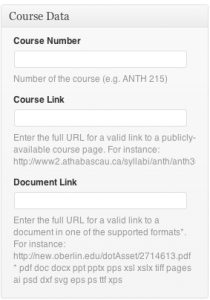

- Full support for course management systems.

- Integration of textbook material and ancillary material (including study guides, instructor manuals, testbanks, presentation files, interactive quizzes, glossaries, lesson plans, coursenotes, etc.).

- Outlining support (more like OmniOutliner or even like OneNote than like Keynote or Pages).

- Mindmapping support (unlikely, but would be cool).

- Whiteboard support (both in-class and online).

- Collaboration features (à la Adobe Connect).

- Support for iCloud (almost a given, but it opens up interesting possibilities).

- iWork integration (sounds likely, but still in my wishlist).

- Embeddable content (à la iWork.com).

- Stability, ease of use, and low-cost (i.e., not Adobe Flash or Acrobat).

- Better support than Apple currently provides for podcast production and publishing.

- More publisher support than for iBooks.

- Geared toward normal users, including learners and educators.

The last three are probably where the problem lies. It’s likely that Apple has courted textbook publishers and may have convinced them that they should up their game with online textbooks. It’s clear to me that publishers risk falling into oblivion if they don’t wake up to the potential of learning content. But I sure hope the announcement goes beyond an agreement with publishers.

Rumour has it that part of the announcement might have to do with bypassing state certification processes, in the US. That would be a big headline-grabber because the issue of state certification is something of a wedge issue. Could be interesting, especially if it means free textbooks (though I sure hope they won’t be ad-supported). But that’s much less interesting than what could be done with learning content.

“User-generated content” may be one of the core improvements in recent computing history, much of which is relevant for teaching. As fellow anthro Mike Wesch has said:

We’ll need to rethink a few things…

And Wesch sure has been thinking about learning.

Problem is, publishers and “user-generated content” don’t go well together. I’m guessing that it’s part of the reason for Apple’s insufficient support for “user-generated content”. For better or worse, Apple primarily perceives its users as consumers. In some cases, Apple sides with consumers to make publishers change their tune. In other cases, it seems to be conspiring with publishers against consumers. But in most cases, Apple fails to see its core users as content producers. In the “collective mind of Apple”, the “quality content” that people should care about is produced by professionals. What normal users do isn’t really “content”. iTunes U isn’t an exception: those of us who give lectures aren’t Apple’s core users (even though the education market as a whole has traditionally been an important part of Apple’s business). The fact that Apple courts us underlines the notion that we, teachers and publishers (i.e. non-students), are the ones creating the content. In other words, Apple supports the old model of publishing along with the old model of education. Of course, they’re far from alone in this obsolete mindframe. But they happen to have several of the tools which could be useful in rethinking education.

Thursday’s event is likely to focus on textbooks. But much more is needed to shift the balance between publishers and learners. Including a major evolution in podcasting.

Podcasting is especially relevant, here. I’ve often thought about what Apple could do to enhance podcasting for learning. Way beyond iTunes U. Into something much more interactive. And I don’t just mean “interactive content” which can be manipulated seamlessly using multitouch gestures. I’m thinking about the back-and-forth of learning and teaching, the conversational model of interactivity which clearly distinguishes courses from mere content.